Historians will remember 2022 as the year AI took the internet by storm. Algorithmic text and image generators, the likes of ChatGPT, Midjourney, and Jasper, exploded in popularity, spawning new internet trends, provoking fierce opposition from flesh-and-blood artists, and threatening the security of creative jobs (like mine). As 2023 dawns, it seems there is no stopping the onslaught of artificial intelligence, which steadily usurps more and more roles once reserved for humans.

What do I think about all this? Well, the world does not run according to my opinions, but for what it’s worth, I’m not comfortable with it in the slightest. Call me a luddite. The “disruptors” in Silicon Valley have an unnerving obsession with rendering the human race obsolete, and a sane society would have met their project with greater caution, or even an outright ban, instead of giving our future over to the machines.

Alas, we do not live in a sane society. Generative AI will be an important part of the landscape for the foreseeable future. So if we can’t get rid of this technology, what is it good for, and what roles can it legitimately fill? With a $10 monthly subscription to Midjourney, I tried to find some answers to those questions—here’s what I discovered.

Creating an image with Midjourney starts with a text prompt, which can range from very short to very long. Based on this prompt, the program draws on patterns it learned from a vast body of imagery scraped from the internet, and recombines them to produce pictures that can be further refined according to the user’s tastes. Keep in mind that it is not creating art, so much as remixing it. While all art is a matter of remixing, and I myself am a staunch defender of the derivative, this AI lacks any kind of imagination or creativity that humans would recognize; as such, it is completely dependent on preexisting content, and has no goals beyond what you set for it.

For each prompt, Midjourney generates four separate images on the same theme. These are not a final product, but rather a starting point for future iterations. Creating more variants from an image is a way to steer an otherwise aimless, unthinking process towards a particular artistic vision. To get a sense of how this works, let’s take a look at the first prompt I attempted: “An astronaut explores an alien jungle planet.” After I submitted my request, Midjourney got back to me with a wide spread of ideas:

These were all rough sketches, but they had promise. The one on the bottom right was most to my liking, suggesting the kind of overgrown, fecund, ravenous jungle I was looking for, so I selected it for more iterations.

You can see that Midjourney had settled on the overall color and composition of the illustration, while still experimenting with different ideas for the astronaut and the exact contours of the alien undergrowth. Its insistence on putting a big green blob in the foreground was a little strange. Altogether, though, the bottom-right image looked pretty good, and I told the program to upscale that one into a full-sized product, rather than crank out four more variants. This was the final version:

It turned out… all right. It could have used some additional fiddling. While the atmosphere was very good, with suitable murk and admirable detail on the foliage, few things in the image had defined form, and the astronaut himself was horribly misshapen. Either the AI had serious limitations, or I had a lot left to learn. Maybe both.

Further experimentation revealed some pitfalls with prompt writing. While Midjourney doesn’t require a specific syntax for inputs, it does get tripped up easily, and its interpretations can vary wildly from what would seem natural to a human reader. Here’s a rather long prompt I submitted, in the hopes that more detail would lead to more disciplined image generation:

Military officer on the command center of a starship. He wears a black uniform with gold trimming, and a peaked cap. The bridge is cramped, crowded with crew, with many control panels, buttons, and switches. It has a utilitarian aesthetic, with many exposed wires and pipes in the ceiling. A hologram shows ships of an enemy fleet.

These were the results:

Clearly, Midjourney did not successfully parse my prompt, as it flat-out ignored details such as the other crewmembers and the hologram. Not all was lost, though. The images that came back were amazingly photorealistic, as if I were looking at a still from some forgotten work of ’80s sci-fi. The uniforms looked outstanding and the old-school computers really popped. It wasn’t quite what I’d ordered, but I was impressed all the same.

The next project was more ambitious. For this one, I had a very particular vision in mind, a mood of cozy nostalgia drawing from old paintings and daydreams of a half-vanished classical past, and I needed to communicate it to the AI without any mixups. I tried the following prompt:

Grassy hilltop in the Italian countryside; sunny weather; crumbling Roman ruins covered in vines; a young man and woman sit together on a toppled marble column, while sheep roam in the valley below

Unlike with the previous prompt, the AI mostly knew what I was talking about this time, and the options on display stuck pretty close to what I had in mind. I only had to filter out a few duds before I landed on some very striking imagery. Here’s where I was after the first few iterations:

The overall composition was rich and evocative. It hit every note I was going for, from the vines to the sheep. Taking a closer look at the details, though, some things didn’t add up—such as the malformed, nigh-Lovecraftian statue, or the girl’s ambiguous number of legs. Midjourney was being a little wonky, and successive iterations only did so much to trim down the craziness.

I decided to start from the ground up. I went back to the first results and selected a different image as my basis, starting a new “family tree,” so to speak. The results were even more breathtaking this time around. Unfortunately, I still had to deal with certain… artifacts.

That sheep-man was not a one-time oddity, mind you. For whatever reason, Midjourney struggled with my description of sheep roaming in the valley below, and it was very insistent about inserting sheep or sheep-like creatures (or at one point, a dog) wherever it could. It was something I just had to deal with; maybe half of the outputs were reasonably sheep-free, and thus usable. With regard to the above image, I was able to excise the sheep-man after another iteration, which gave me this stunner:

Just look at that! Gorgeous, gorgeous, gorgeous. Easily my favorite of all the images I’ve generated so far. For once, the human figures had no freakish abnormalities¹, and there were no obtrusive background details to spoil the mood. A success! Another stab at the prompt, this time without any sheep at all, created one that was almost as good:

So I had some solid results, translating a mental image into a prompt, and a prompt into viable artwork. But I was also interested in a less literal approach. What if I just entered snippets of prose as-is, and watched what happened? Obviously, the AI cannot interpret fiction the way a human would, but it can pick up on specific details and moods in the writing—sometimes with surprising results. Take this passage from the novel I’m writing:

He dreamt that he was descending by parachute through the atmosphere of a new world, a vast panorama bursting with green, pockmarked by blue lakes and flocks of puffy white clouds. Sunshine warmed his scales. When he dropped through the clouds he was immersed in refreshing vapor, a cool mist extending formlessly in all directions, shrouding the universe in grey. Eventually he made landfall in a strange forest, and he did not remember what happened after that.

When I entered it into Midjourney, here’s what I got back:

Does it accurately represent the passage? No. But it does a great job getting across some of its salient ideas—falling, freshness, wetness, an experience like parachuting through an ocean. Strangely for an unfeeling AI, Midjourney conveys moods and impressions far better than it does technical details. While human figures and machines may end up looking a little odd, and come out downright mutilated in the worst cases, the atmosphere is almost always appropriate for the prompt, with vibrant colors and realistic lighting.

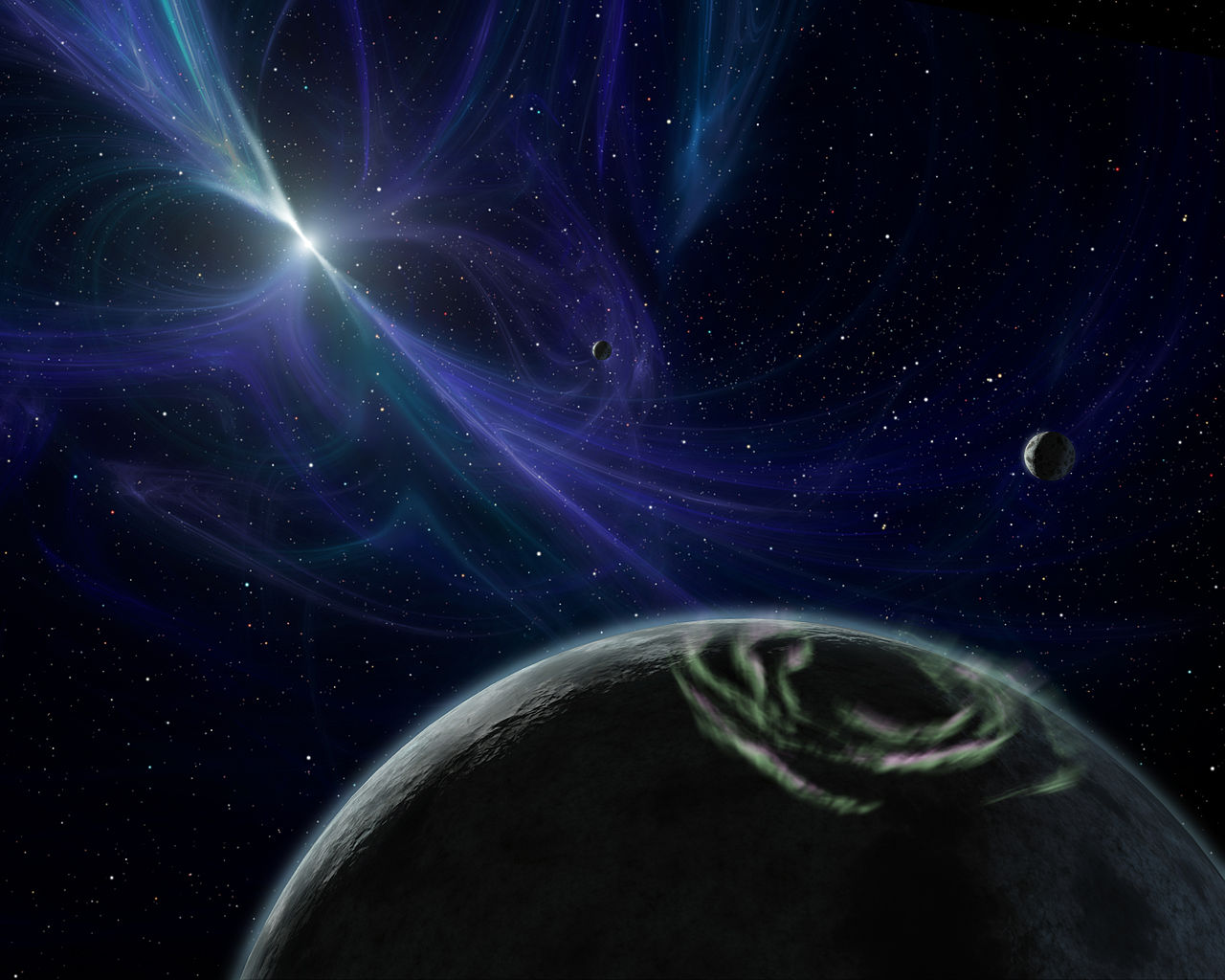

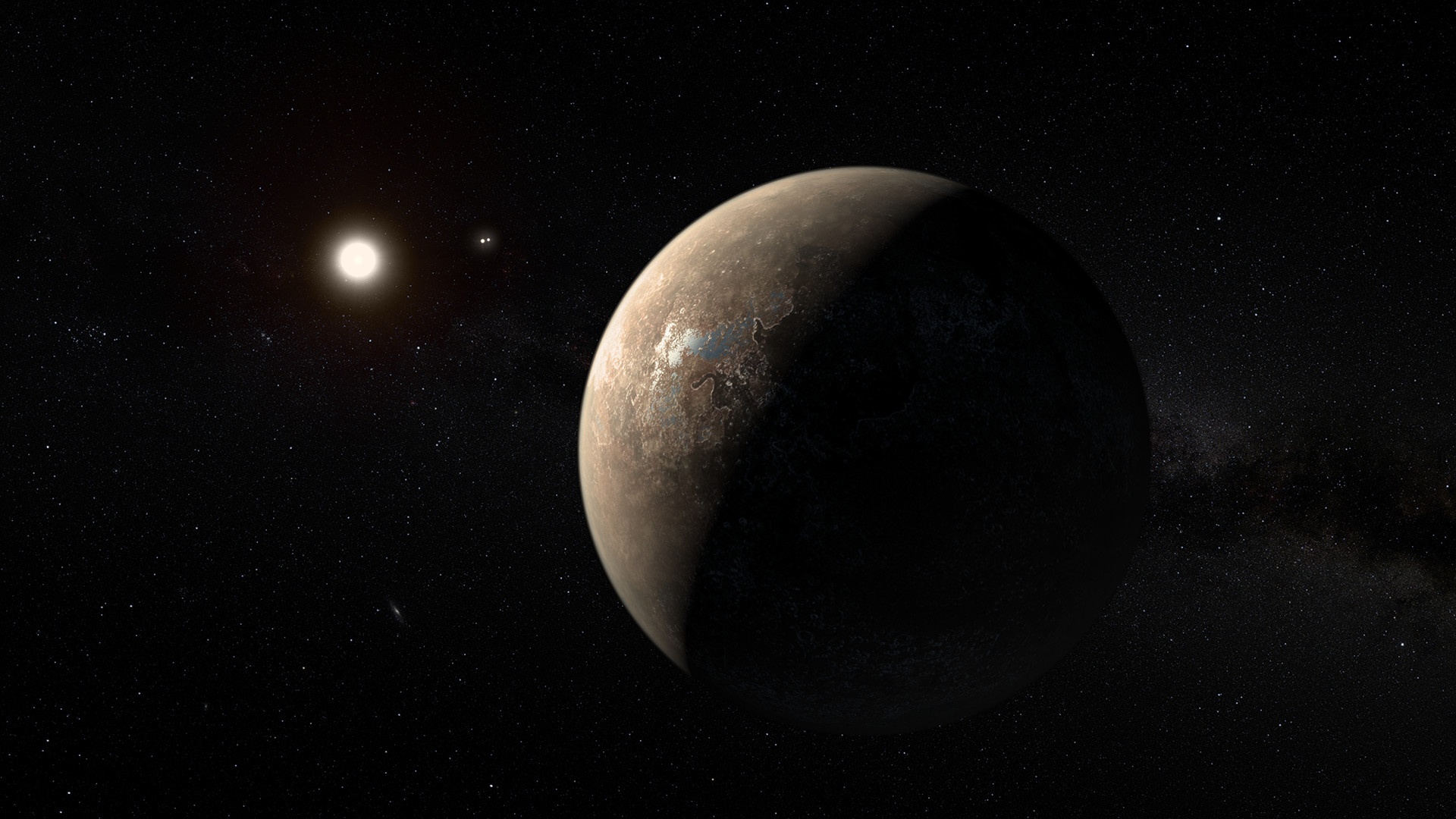

Midjourney is also very good at mimicking specific styles. Given my lifelong fascination with Soviet space propaganda, you can imagine how I put this to use:

Not bad. Not bad at all. While one of the planets in the first poster is trying very hard to be a face, and the “Russian” text is all gibberish, it’s clear that the AI has scanned through and successfully emulated a tremendous number of space-age cosmonaut posters. The deep reds and yellows are there; so is the energetic optimism, and the happy, forward-looking communists. All it needs is a bombastic caption: “Glory to the conquerors of space!”

So what’s my verdict? Despite its significant limitations, Midjourney is equal parts stunning and terrifying. It might have a hard time with distinct ideas—this program will never depict a scene quite to your specifications, and it would be hopeless designing, say, a fictional weapon or vehicle—but it remains a tool of unprecedented power, producing lavish illustrations at the push of a button.

I don’t think that ethical use of generative AI includes tasks you would otherwise pay artists for. Artists are still vital, they will always be vital, and the last thing we should want is a future where humans have outsourced their creative ambitions to the unthinking algorithm. In some areas, though, programs like Midjourney can fill an unmet niche. For example, one issue this blog has is a lack of “flavor images,” to break up long blocks of text on topics for which there are few public-domain pictures available. My solution so far has been to go without, or maybe shoehorn in some low-quality, barely relevant photos from Wikimedia Commons. So why not fill the pages with quick, flashy AI content? It would make my posts more interesting, and in this case it wouldn’t detract from anyone’s income, since I was using free imagery to begin with.

But we are playing with fire here. Given a few more years, what is now a quirky tool for pretty pictures may have disruptive and far-reaching effects. As this technology picks up steam, challenging human creativity in increasingly radical ways, we would do well to exercise caution—even if the pictures are very pretty.

¹ At least, none visible in passing. I do wish the eyes and hands had turned out better.

Yes, Midjourney sucks at doing hands (:

Yes! One of my friends theorizes it’s because the AI is only imitating pictures of people at the surface level, without having any conceptual understanding of human form and structure—an extra finger here or there is no big deal to it, as long as it gets the broad strokes right.

I agree that Mj has no ‘conception’ of the human form, or any form, for that matter, and is only imitating, but in the time I’ve been using it (about 4 months), I’ve seen it go from being useless at doing eyes, ears, noses, and hair, to being stunningly good at them. I find it odd it just has not made the same advances with hands… I mean, if it is just imitating/mimicking (which it is), how hard is it to mimic a correctly fingered hand, as opposed to an ear, or hair? There are even groups on the Discord Mj site that are deliberately trying to evolve Mj’s hand-drawing ability, but don’t seem to be having much luck. I’m sure it will eventually solve them (:

Interesting! I was unaware of the rapid pace of its progress—but it sounds like it will solve the hand problem in no time, if it’s already mastered what you would think would be even tougher challenges (especially hair and faces).

Intriguing exploration. Definitely echo the sentiments against AI.

Thank you! I see I’m not the only luddite around these parts, LOL.

Thanks, Nic. Interesting AI-generated artwork. I think you have hit it right on the head – this could get out of hand.

Thank you! Glad you enjoyed the post—and that you agree that this tech is a little worrisome!

AI in the wrong hands can be disastrous – programmed for political gain or worse.

I see I’m outnumbered, but I’m actually a supporter of AI art. I think it’s because I’ve seen not just the bad but also the good of what happens when you get a technological tool lowering barriers to entry in an art field (in this case internet self-publishing for writing).

Besides, it’s here to stay. A weird analogy I have is free agency in sports. George Steinbrenner understandably opposed it at first, but then saw “Hey, I can take advantage of this”. Cue seven World Series titles.

Well, despite my philosophical and economic reservations, I agree that it’s here to stay! For my own site, it will be too helpful not to use in some cases.

I like your comparison to self-publishing—hadn’t thought of it that way before. But I think a major difference is that internet self-publishing mainly reduces barriers for distribution, whereas generative AI directly challenges the process of creation, devaluing it, and thus will have a much more profound impact. You’re right, though, that a world where the average Joe can produce stunning art would have its upsides. More people will be able to participate in creation and generate visually appealing imagery for their projects, which may indeed make the internet a lot prettier to look at.